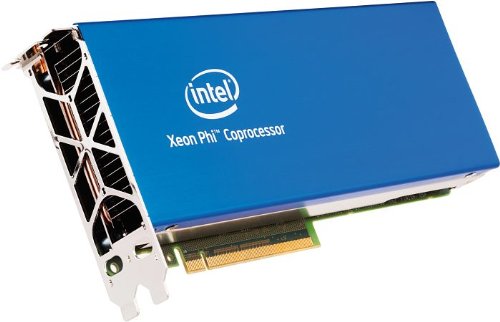

Programming for the Xeon Phi Coprocessor on Cypress

The Xeon Phi coprocessor is an accelerator that provides many cores to parallel applications. While it offers fewer threads than a typical GPU accelerator, each core is much "smarter" (a full x86 core), and the programming paradigm is similar to coding for CPUs.

Xeon Phi Coprocessor Hardware

Each compute node of Cypress is equipped with two (2) Xeon Phi 7120P coprocessors.

The 7120P is equipped with:

- 61 physical x86 cores running at 1.238 GHz

- Four (4) hardware threads on each core

- 16 GB GDDR5 memory

- Uniquely wide SIMD capabilities via 512-bit wide vectors (8 doubles or 16 single-precision floats)

- Unique IMCI instruction set

- Connected via PCIe Bus

- Fully coherent L1 and L2 cache

All this adds up to about 2 TFLOP/s single precision (1 TFLOP/s double precision) of potential computing power.
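As a rough check, these figures can be reproduced from the hardware list. The sketch below assumes (this is not stated on this page) that each core can issue one 512-bit fused multiply-add per cycle, which counts as two floating-point operations per vector lane. A 512-bit vector holds 8 doubles or 16 single-precision floats.

$$
\begin{aligned}
\text{hardware threads} &= 61 \times 4 = 244 \\
\text{double precision} &\approx 61 \times 1.238\ \text{GHz} \times 8 \times 2 \approx 1208\ \text{GFLOP/s} \approx 1.2\ \text{TFLOP/s} \\
\text{single precision} &\approx 61 \times 1.238\ \text{GHz} \times 16 \times 2 \approx 2417\ \text{GFLOP/s} \approx 2.4\ \text{TFLOP/s}
\end{aligned}
$$

These are theoretical peaks; real applications should expect to reach only a fraction of them.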

Each Xeon Phi can be regarded as its own small machine (a cluster, really) running a stripped-down version of Linux. We can ssh onto them, run code on them, and treat them as another asset to be recruited into our MPI executions.

What Do I Call It?

The 7120P is referred to by many names, all of them correct:

- The Phi

- The coprocessor

- The Xeon Phi

- The MIC (pronounced either "Mick," as in Jagger, or "Mike"), which stands for Many Integrated Core

- Knights Corner (the codename for this generation)

- Knights Landing (the codename for the next generation)

You'll typically hear us call it either the MIC or the Phi.

Xeon Phi Usage Models

The Intel compilers and libraries support three distinct programming models:

- Automatic Offloading (AO) - The Intel Math Kernel Library (MKL) sends certain calculations to the Phi without any changes to your source code.

- Native Programming - Code is compiled to run on the Xeon Phi coprocessor and ONLY on the Xeon Phi coprocessor.

- Offloading - Certain parallel sections of your source code are identified for offloading to the coprocessor. This provides the greatest amount of control and allows the CPUs and coprocessors to work in tandem.

Automatic Offloading

Native Programming
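As a minimal sketch of what native code can look like, the function below is ordinary OpenMP C with nothing Phi-specific in the source. The assumption here is that it is built with the Intel compiler's `-mmic` flag plus that compiler's OpenMP flag, so the resulting binary runs only on the coprocessor. The function names are made up for illustration.

```c
#include <stdio.h>

/* Hypothetical helper: count how many threads actually join a parallel
   region. Each thread adds 1 to its private copy of n, and the reduction
   sums the copies. Without OpenMP enabled the pragma is ignored and the
   function returns 1. */
int count_threads(void) {
    int n = 0;
    #pragma omp parallel reduction(+:n)
    n += 1;
    return n;
}

/* Hypothetical helper: print the thread count where the code is running. */
void report_threads(void) {
    printf("running with %d thread(s)\n", count_threads());
}
```

Calling `report_threads()` from `main` in a natively compiled binary and running it on the coprocessor (for example over ssh) would be expected to report a thread count on the order of the card's hardware threads, though the exact number depends on the OpenMP settings in effect.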

Offloading
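A minimal sketch of explicit offloading, assuming the Intel compiler's `#pragma offload` syntax: the marked block is shipped to the first coprocessor, the `in` clause copies the input array over, and `inout` brings the result back. The function name `offload_sum` is made up for illustration.

```c
/* Hypothetical example: sum n doubles.
   With the Intel compiler, the block under "#pragma offload" runs on
   coprocessor 0. Compilers that do not recognize the pragma ignore it,
   and the same loop simply runs on the host. */
double offload_sum(double *a, int n) {
    double sum = 0.0;

    /* in(...)    : copy n elements of a to the coprocessor before the block
       inout(sum) : copy sum over, then copy the result back afterwards */
    #pragma offload target(mic:0) in(a : length(n)) inout(sum)
    {
        /* Spread the loop across the coprocessor's threads. */
        #pragma omp parallel for reduction(+:sum)
        for (int i = 0; i < n; i++) {
            sum += a[i];
        }
    }
    return sum;
}
```

Because the host keeps running everything outside the marked block, this model lets you choose exactly which loops justify the cost of moving their data to the coprocessor.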

Programming Considerations

The number one thing to keep in mind is that all data traffic to and from the coprocessors must travel over PCIe. This is a relatively slow connection compared to memory, and the more you can minimize this communication, the faster your code will run.
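To get a feel for the imbalance, here is a back-of-the-envelope sketch. The helper names are made up, and the 6 GB/s PCIe rate used in the usage note is an illustrative assumption, not a measurement from Cypress.

```c
/* Hypothetical helper: seconds to move `bytes` over a link that
   sustains `gb_per_s` gigabytes per second. */
double transfer_seconds(double bytes, double gb_per_s) {
    return bytes / (gb_per_s * 1e9);
}

/* Hypothetical helper: seconds to execute `flops` floating-point
   operations at a rate of `gflops` GFLOP/s. */
double compute_seconds(double flops, double gflops) {
    return flops / (gflops * 1e9);
}

/* Ratio of transfer time to compute time for c[i] = a[i] + b[i] on
   n doubles: two arrays go in, one comes back, one flop per element. */
double vector_add_transfer_ratio(double n, double gb_per_s, double gflops) {
    double bytes = 3.0 * n * sizeof(double);
    return transfer_seconds(bytes, gb_per_s) / compute_seconds(n, gflops);
}
```

For example, `vector_add_transfer_ratio(1e6, 6.0, 1000.0)` comes out at about 4000: under these assumptions, moving the data takes thousands of times longer than the arithmetic would at peak. Offloading tends to pay off only when a lot of computation is done per byte transferred, or when data can stay on the coprocessor between offloads.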

Future Training

We've only scratched the surface on the potential of the Xeon Phi coprocessor. If you are interested in learning more, Colfax International will be giving two days of instruction on coding for the Xeon Phi at Tulane at the end of September. Interested parties can register at

CDT 101: http://events.r20.constantcontact.com/register/event?oeidk=a07eayq4gvha16a1237&llr=kpiwi7pab

CDT 102: http://events.r20.constantcontact.com/register/event?oeidk=a07eayqb5mwf5397895&llr=kpiwi7pab
