Programming for the Xeon Phi Coprocessor on Cypress

Workshop Reminder

To take advantage of the workshop QOS:

export MY_PARTITION=workshop export MY_QUEUE=workshop idev -c 4 --gres=mic:0

To follow along, you can copy the source code and submission scripts

cp -r /lustre/project/workshop/PhiExamples/ .

The Xeon Phi coprocessor is an accelerator used to provide many cores to parallel applications. While it has fewer threads available than a typical GPU accelerator, the processors are much "smarter" and the programming paradigm is similar to what you experience coding for CPUs.

Xeon Phi Coprocessor Hardware

Each compute node of Cypress is equipped with two (2) Xeon Phi 7120P coprocessors

The 7120p is equipped with

- 61 physical x86 cores running at 1.238 GHz

- Four (4) Hardware threads on each core

- 16GB GDDR5 memory

- Uniquely wide SIMD capabilities via 512-bit wide vectors (16 doubles!)

- Unique IMCI instruction set

- Connected via PCIe Bus

- Fully coherent L1 and L2 cache

All this adds up to about 2TFLOP/s (1TFLOG/s double precission) of potential computing power.

Each Xeon Phi can be regarded as it's own small machine (cluster really) running a stripped down version of linux. We can ssh onto them, we can run code on them, and we can treat them as another asset to be recruited into our MPI executions.

What Do I Call It?

The 7120p is referred to by many names, all of them correct

- The Phi

- The coprocessor

- The Xeon Phi

- The MIC (pronounced both Mic as in Jagger and Mike) which stands for Many Integrated Cores

- Knights Landing (current gen)

- Knights Hill (next gen)

You'll typically hear us call the 7120p either the MIC or the Phi. This is to help distinguish it from the Xeon E5 processors which we'll refer to as the host.

Xeon Phi Usage Models

The intel suite provides parallel instantiations and compilers that support three distinct programming models:

- Automatic Offloading (AO) - the intel MKL library sends certain calculations to the Phi without any user input.

- Native Programming - Code is compiled to run on the Xeon Phi Coprocessor and ONLY on the Xeon Phi Coprocessor.

- Offloading - Certain Parallel sections of your source code are identified for offloading to the coprocessor. This provides the greatest amount of control and allows for the CPUs and coprocessors to work in tandem.

Automatic Offloading

Eligibility

As we saw yesterday during our Matlab tutorial, any program/code that makes use of the Intel MKL library may take advantage of Automatic Offloading (AO) to the MIC. However, not every MKL routine will automatically offload. The Routines that are eligible for AO are:

- BLAS:

- BLAS level-3 subroutines - ?SYMM,?TRMM, ?TRSM, ?GEMM

- LAPACK:

- LU (?GETRF), Cholesky ((S/D)POTRF), and QR (?GEQRF) factorization functions

However, AO will only kick in if MKL deems the problem to be of sufficient size (i.e. the increase in parallelism will outweigh the increase in overhead). For instance, SGEMM will use AO only if the matrix size exceeds 2048x2048. For more information on which routines are eligible for AO see the white paper MKL Automatic Offload enabled functions for Intel Xeon Phi coprocessors

Enabling Offloading

To enable AO on Cypress you must

- Load the Intel Parallel Studio XE module

- Turn on MKL AO by setting the environment variable MKL_MIC_ENABLE to 1 (0 or nothing will turn off MKL AO)

- (OPTIONAL) Turn on offload reporting to track your use of the MIC by setting OFFLOAD_REPORT to either 1 or 2. Setting OFFLOAD_REPORT to 2 adds more detail than 1 and will give you information on data transfers.

[tulaneID@cypress1]$ module load intel-psxe [tulaneID@cypress1]$ export MKL_MIC_ENABLE=1 [tulaneID@cypress1]$ export OFFLOAD_REPORT=2

Example using SGEMM

Let's do a small example using SGEMM to test the behavior of MLK AO

/* System headers */ #include <stdio.h> #include <stdlib.h> #include <malloc.h> #include <stdint.h> #include "mkl.h" // dtime // // returns the current wall clock time // double dtime() { double tseconds = 0.0; struct timeval mytime; gettimeofday(&mytime,(struct timezone*)0); tseconds = (double)(mytime.tv_sec + mytime.tv_usec*1.0e-6); return( tseconds ); } int main(int argc, char **argv) { float *A, *B, *C; /* Matrices */ double workdivision; double tstart, tstop, ttime; MKL_INT N = 2560; /* Matrix dimensions */ MKL_INT LD = N; /* Leading dimension */ int matrix_bytes; /* Matrix size in bytes */ int matrix_elements; /* Matrix size in elements */ float alpha = 1.0, beta = 1.0; /* Scaling factors */ char transa = 'N', transb = 'N'; /* Transposition options */ int i, j; /* Counters */ matrix_elements = N * N; matrix_bytes = sizeof(float) * matrix_elements; /* Allocate the matrices */ A = malloc(matrix_bytes); B = malloc(matrix_bytes); C = malloc(matrix_bytes); /* Initialize the matrices */ for (i = 0; i < matrix_elements; i++) { A[i] = 1.0; B[i] = 2.0; C[i] = 0.0; } tstart = dtime(); sgemm(&transa, &transb, &N, &N, &N, &alpha, A, &N, B, &N, &beta, C, &N); tstop = dtime(); /* Free the matrix memory */ free(A); free(B); free(C); // elasped time ttime = tstop - tstart; // // Print the results // if ((ttime) > 0.0) { printf("Time spent on SGEMM = %10.3lf\n",ttime); } printf("Done\n"); return 0; }

To test MKL AO

- Get onto a compute node using idev

[tuhpc002@cypress1 Day2]$ export MY_PARTITION=workshop [tuhpc002@cypress1 Day2]$ export MY_QUEUE=workshop [tuhpc002@cypress1 Day2]$ idev -c 4 --gres=mic:0 Requesting 1 node(s) task(s) to workshop queue of workshop partition 1 task(s)/node, 4 cpu(s)/task, 2 MIC device(s)/node Time: 0 (hr) 60 (min). Submitted batch job 54982 JOBID=54982 begin on cypress01-089 --> Creating interactive terminal session (login) on node cypress01-089. --> You have 0 (hr) 60 (min). Last login: Fri Aug 21 07:16:58 2015 from cypress1.cm.cluster [tuhpc002@cypress01-089 Day2]$

Note: We will be sharing MICs so expect some resource conflicts

- Load the Intel module containing MKL and set your environment variables

[tuhpc002@cypress01-089 Day2]$ module load intel-psxe [tuhpc002@cypress01-089 Day2]$ export MKL_MIC_ENABLE=0 [tuhpc002@cypress01-089 Day2]$ export OFFLOAD_REPORT=2

Notice that automatic offloading is turned OFF. This will set our baseline.

- Compile the example code being sure to link to the MKL library

- Run the executable

- Turn on MKL AO and run it again

[tuhpc002@cypress01-089 Day2]$ icc -O3 -mkl -openmp sgemm_example.c -o AOtest [tuhpc002@cypress01-089 Day2]$ ./AOtest Time spent on SGEMM = 0.835 Done [tuhpc002@cypress01-089 Day2]$ export MKL_MIC_ENABLE=1 [tuhpc002@cypress01-089 Day2]$ ./AOtest [MKL] [MIC --] [AO Function] SGEMM [MKL] [MIC --] [AO SGEMM Workdivision] 0.60 0.20 0.20 [MKL] [MIC 00] [AO SGEMM CPU Time] 2.858848 seconds [MKL] [MIC 00] [AO SGEMM MIC Time] 0.104307 seconds [MKL] [MIC 00] [AO SGEMM CPU->MIC Data] 31457280 bytes [MKL] [MIC 00] [AO SGEMM MIC->CPU Data] 5242880 bytes [MKL] [MIC 01] [AO SGEMM CPU Time] 2.858848 seconds [MKL] [MIC 01] [AO SGEMM MIC Time] 0.113478 seconds [MKL] [MIC 01] [AO SGEMM CPU->MIC Data] 31457280 bytes [MKL] [MIC 01] [AO SGEMM MIC->CPU Data] 5242880 bytes Time spent on SGEMM = 3.436 Done [tuhpc002@cypress01-089 Day2]$

The Point: This example gets at some of the challenges of coding for the Xeon Phi. Utilization is simple, but optimization can be a real challenge. Let's look at a few more options we can manipulate through environment variables:

- The work division among the Host and MICs can also be tuned by hand using MKL_MIC_<0,1>_WORKDIVISION

[tuhpc002@cypress01-089 Day2]$ export MKL_MIC_0_WORKDIVISION=1.0 [tuhpc002@cypress01-089 Day2]$ ./AOtest [MKL] [MIC --] [AO Function] SGEMM [MKL] [MIC --] [AO SGEMM Workdivision] 0.00 1.00 0.00 [MKL] [MIC 00] [AO SGEMM CPU Time] 2.831957 seconds [MKL] [MIC 00] [AO SGEMM MIC Time] 0.141694 seconds [MKL] [MIC 00] [AO SGEMM CPU->MIC Data] 52428800 bytes [MKL] [MIC 00] [AO SGEMM MIC->CPU Data] 26214400 bytes [MKL] [MIC 01] [AO SGEMM CPU Time] 2.831957 seconds [MKL] [MIC 01] [AO SGEMM MIC Time] 0.000000 seconds [MKL] [MIC 01] [AO SGEMM CPU->MIC Data] 0 bytes [MKL] [MIC 01] [AO SGEMM MIC->CPU Data] 0 bytes Time spent on SGEMM = 3.394

- The number of threads used on each MIC can be controlled using MIC_OMP_NUMTHREADS

[tuhpc002@cypress01-089 Day2]$ export MIC_OMP_NUMTHREADS=122 [tuhpc002@cypress01-089 Day2]$ ./AOtest [MKL] [MIC --] [AO Function] SGEMM [MKL] [MIC --] [AO SGEMM Workdivision] 0.60 0.20 0.20 [MKL] [MIC 00] [AO SGEMM CPU Time] 1.625511 seconds [MKL] [MIC 00] [AO SGEMM MIC Time] 0.102266 seconds [MKL] [MIC 00] [AO SGEMM CPU->MIC Data] 31457280 bytes [MKL] [MIC 00] [AO SGEMM MIC->CPU Data] 5242880 bytes [MKL] [MIC 01] [AO SGEMM CPU Time] 1.625511 seconds [MKL] [MIC 01] [AO SGEMM MIC Time] 0.089364 seconds [MKL] [MIC 01] [AO SGEMM CPU->MIC Data] 31457280 bytes [MKL] [MIC 01] [AO SGEMM MIC->CPU Data] 5242880 bytes Time spent on SGEMM = 2.288 Done [tuhpc002@cypress01-089 Day2]$

- We can control the distribution of threads using MIC_KMP_AFFINITY

[tuhpc002@cypress01-089 Day2]$ export MIC_KMP_AFFINITY=scatter [tuhpc002@cypress01-089 Day2]$ ./AOtest [MKL] [MIC --] [AO Function] SGEMM [MKL] [MIC --] [AO SGEMM Workdivision] 0.60 0.20 0.20 [MKL] [MIC 00] [AO SGEMM CPU Time] 1.631954 seconds [MKL] [MIC 00] [AO SGEMM MIC Time] 0.101270 seconds [MKL] [MIC 00] [AO SGEMM CPU->MIC Data] 31457280 bytes [MKL] [MIC 00] [AO SGEMM MIC->CPU Data] 5242880 bytes [MKL] [MIC 01] [AO SGEMM CPU Time] 1.631954 seconds [MKL] [MIC 01] [AO SGEMM MIC Time] 0.105702 seconds [MKL] [MIC 01] [AO SGEMM CPU->MIC Data] 31457280 bytes [MKL] [MIC 01] [AO SGEMM MIC->CPU Data] 5242880 bytes Time spent on SGEMM = 2.028 Done [tuhpc002@cypress01-089 Day2]$

Native Programming

The native model centers around the notion that each MIC is its own machine with it's own architecture. The first challenge is to compile code to run specifically on the hardware of the MIC.

Let's start out in interactive mode and then we will look at job submission:

export MY_PARTITION=workshop export MY_QUEUE=workshop idev -c 4 --gres=mic:0

- Load the intel modules

- intel-psxe

- intel/mic/sdk/3.3

- intel/mic/runtime/3.3

And let's examine the code helloflops3.c from Jim Jeffers and James Rainers seminal text Intels Xeon Phi Coprocessor High - Performance Programming

// // // helloflops2 // // A simple example that gets lots of Flops (Floating Point Operations) on // Intel(r) Xeon Phi(tm) co-processors using openmp to scale // #include <stdio.h> #include <stdlib.h> #include <string.h> #include <omp.h> #include <sys/time.h> // dtime // // returns the current wall clock time // double dtime() { double tseconds = 0.0; struct timeval mytime; gettimeofday(&mytime,(struct timezone*)0); tseconds = (double)(mytime.tv_sec + mytime.tv_usec*1.0e-6); return( tseconds ); } #define FLOPS_ARRAY_SIZE (1024*1024) #define MAXFLOPS_ITERS 100000000 #define LOOP_COUNT 128 // number of float pt ops per calculation #define FLOPSPERCALC 2 // define some arrays - // make sure they are 64 byte aligned // for best cache access float fa[FLOPS_ARRAY_SIZE] __attribute__((aligned(64))); float fb[FLOPS_ARRAY_SIZE] __attribute__((aligned(64))); // // Main program - pedal to the metal...calculate using tons o'flops! // int main(int argc, char *argv[] ) { int i,j,k; int numthreads; double tstart, tstop, ttime; double gflops = 0.0; float a=1.1; // // initialize the compute arrays // // #pragma omp parallel #pragma omp master numthreads = omp_get_num_threads(); printf("Initializing\r\n"); #pragma omp parallel for for(i=0; i<FLOPS_ARRAY_SIZE; i++) { fa[i] = (float)i + 0.1; fb[i] = (float)i + 0.2; } printf("Starting Compute on %d threads\r\n",numthreads); tstart = dtime(); // scale the calculation across threads requested // need to set environment variables OMP_NUM_THREADS and KMP_AFFINITY #pragma omp parallel for private(j,k) for (i=0; i<numthreads; i++) { // each thread will work it's own array section // calc offset into the right section int offset = i*LOOP_COUNT; // loop many times to get lots of calculations for(j=0; j<MAXFLOPS_ITERS; j++) { // scale 1st array and add in the 2nd array for(k=0; k<LOOP_COUNT; k++) { fa[k+offset] = a * fa[k+offset] + fb[k+offset]; } } } tstop = dtime(); // # of gigaflops we just calculated gflops = (double)( 1.0e-9*numthreads*LOOP_COUNT* MAXFLOPS_ITERS*FLOPSPERCALC); //elasped time ttime = tstop - tstart; // // Print the results // if ((ttime) > 0.0) { printf("GFlops = %10.3lf, Secs = %10.3lf, GFlops per sec = %10.3lf\r\n", gflops, ttime, gflops/ttime); } return( 0 ); }

Lets begin by compiling the code for the host and running it on the host processor

[tuhpc002@cypress01-089 Day2]$ icc -O3 -openmp helloflops3.c -o helloflops3_host [tuhpc002@cypress01-089 Day2]$ ./helloflops3_host Initializing Starting Compute on 20 threads GFlops = 512.000, Secs = 6.349, GFlops per sec = 80.645 [tuhpc002@cypress01-089 Day2]$

Not bad. Now lets compile a native version by adding the architecture flag -mmic.

[tuhpc002@cypress01-089 Day2]$ icc -O3 -openmp -mmic helloflops3.c -o helloflops3_mic [tuhpc002@cypress01-089 Day2]$ ./helloflops3_mic -bash: ./helloflops3_mic: cannot execute binary file [tuhpc002@cypress01-089 Day2]$

We could not execute our binary because it we tried to run it on the host architecture. We could ssh onto one of the MICs and manually set our library environment variables to run the code, but a cleaner method (and the recommended method) is to use the intel program micnativeloadex.

[tuhpc002@cypress01-089 Day2]$ micnativeloadex ./helloflops3_mic Initializing Starting Compute on 240 threads GFlops = 6144.000, Secs = 2.630, GFlops per sec = 2335.925 [tuhpc002@cypress01-089 Day2]$

micnativeloadex has a number of options which can be seen using the -h or help flag.

[tulaneID@cypress01 $ micnativeloadex -h

Usage:

micnativeloadex [ -h | -V ] AppName -l -t timeout -p -v -d coprocessor -a "args" -e "environment"

-a "args" An optional string of command line arguments to pass to

the remote app.

-d The (zero based) index of the Intel(R) Xeon Phi(TM) coprocessor to run the app on.

-e "environment" An optional environment string to pass to the remote app.

Multiple environment variable may be specified using spaces as separators:

-e "LD_LIBRARY_PATH=/lib64/ DEBUG=1"

-h Print this help message

-l Do not execute the binary on the coprocessor. Instead, list the shared library

dependency information.

-p Disable console proxy.

-t Time to wait for the remote app to finish (in seconds). After the timeout

is reached the remote app will be terminated.

-v Enable verbose mode. Note that verbose output will be displayed

if the remote app terminates abnormally.

-V Show version and build information

Notice that we can use the -d flag to select which MIC we want to run on and the -e flag to set environment variables on the MIC (separated by whitespace). For example, we can choose to run on MIC0 and set the number of threads and their affinity with

[tuhpc002@cypress01-089 Day2]$ micnativeloadex ./helloflops3_mic -e "OMP_NUM_THREADS=120 KMP_AFFINITY=scatter" -d 0 Initializing Starting Compute on 120 threads GFlops = 3072.000, Secs = 1.500, GFlops per sec = 2048.143 [tuhpc002@cypress01-089 Day2]$

We've been using idev as an instructional tool, but we won't normally be running our MIC native jobs interactively. Rather, we'll be submitting jobs that we want to run in native mode on a compute node. An example SLURM jobscript script for our code would look like

#!/bin/bash #SBATCH --qos=workshop # Quality of Service #SBATCH --partition=workshop #Partition #SBATCH --job-name=nativeTest # Job Name #SBATCH --time=00:10:00 # WallTime #SBATCH --nodes=1 # Number of Nodes #SBATCH --ntasks-per-node=1 # Number of tasks (MPI presseces) #SBATCH --cpus-per-task=1 # Number of processors per task OpenMP threads() #SBATCH --gres=mic:1 # Number of Co-Processors module load intel-psxe/2015-update1 module load intel/mic/sdk/3.3 module load intel/mic/runtime/3.3 micnativeloadex ./helloflops3_mic -e "OMP_NUM_THREADS=120 KMP_AFFINITY=scatter" -d 0

Offloading

Offloading allows us to designate specific sections of our code that we wish to have executed on the MIC. Unlike the first two methods, this requires (minimal) alteration of the source code. Lets take our helloflop3.c example and modify it so that it offloads the area of heavy computation

// // // helloflops3offl // // A simple example that gets lots of Flops (Floating Point Operations) on // Intel(r) Xeon Phi(tm) co-processors using offload plus openmp to scale // #include <stdio.h> #include <stdlib.h> #include <string.h> #include <omp.h> #include <sys/time.h> // dtime // // returns the current wall clock time // double dtime() { double tseconds = 0.0; struct timeval mytime; gettimeofday(&mytime,(struct timezone*)0); tseconds = (double)(mytime.tv_sec + mytime.tv_usec*1.0e-6); return( tseconds ); } #define FLOPS_ARRAY_SIZE (1024*512) #define MAXFLOPS_ITERS 100000000 #define LOOP_COUNT 128 // number of float pt ops per calculation #define FLOPSPERCALC 2 // define some arrays - // make sure they are 64 byte aligned // for best cache access __declspec ( target (mic)) float fa[FLOPS_ARRAY_SIZE] __attribute__((aligned(64))); __declspec ( target (mic)) float fb[FLOPS_ARRAY_SIZE] __attribute__((aligned(64))); // // Main program - pedal to the metal...calculate using tons o'flops! // int main(int argc, char *argv[] ) { int i,j,k; int numthreads; double tstart, tstop, ttime; double gflops = 0.0; float a=1.1; // // initialize the compute arrays // // #pragma offload target (mic) #pragma omp parallel #pragma omp master numthreads = omp_get_num_threads(); printf("Initializing\r\n"); #pragma omp parallel for for(i=0; i<FLOPS_ARRAY_SIZE; i++) { fa[i] = (float)i + 0.1; fb[i] = (float)i + 0.2; } printf("Starting Compute on %d threads\r\n",numthreads); tstart = dtime(); // scale the calculation across threads requested // need to set environment variables OMP_NUM_THREADS and KMP_AFFINITY #pragma offload target (mic) #pragma omp parallel for private(j,k) for (i=0; i<numthreads; i++) { // each thread will work it's own array section // calc offset into the right section int offset = i*LOOP_COUNT; // loop many times to get lots of calculations for(j=0; j<MAXFLOPS_ITERS; j++) { // scale 1st array and add in the 2nd array #pragma vector aligned for(k=0; k<LOOP_COUNT; k++) { fa[k+offset] = a * fa[k+offset] + fb[k+offset]; } } } tstop = dtime(); // # of gigaflops we just calculated gflops = (double)( 1.0e-9*numthreads*LOOP_COUNT* MAXFLOPS_ITERS*FLOPSPERCALC); //elasped time ttime = tstop - tstart; // // Print the results // if ((ttime) > 0.0) { printf("GFlops = %10.3lf, Secs = %10.3lf, GFlops per sec = %10.3lf\r\n", gflops, ttime, gflops/ttime); } return( 0 ); }

Changes to take note of:

- The addition of the directive before the section of code that we wish to run on the MIC

#pragma offload target (mic) - The alteration of our array declarations indicating they would be part of offload use, eg

__declspec ( target (mic)) float fa[FLOPS_ARRAY_SIZE] __attribute__((aligned(64)));

Lets compile our new code

icc -openmp -O3 helloflops3offload.c -o helloflops3offload

And let's take a look at a submission script for our offloading example

#!/bin/bash #SBATCH --qos=workshop # Quality of Service #SBATCH --partition=workshop #Partition #SBATCH --job-name=offloadTest # Job Name #SBATCH --time=00:10:00 # WallTime #SBATCH --nodes=1 # Number of Nodes #SBATCH --ntasks-per-node=1 # Number of tasks (MPI presseces) #SBATCH --cpus-per-task=20 # Number of processors per task OpenMP threads() #SBATCH --gres=mic:1 # Number of Co-Processors module load intel-psxe/2015-update1 module load intel/mic/sdk/3.3 module load intel/mic/runtime/3.3 export OMP_NUM_THREADS=$SLURM_CPUS_PER_TASK export MIC_ENV_PREFIX=MIC export MIC_OMP_NUM_THREADS=120 export MIC_KMP_AFFINITY=scatter ./helloflops3offload

Programming Considerations

As we've seen, getting code to execute on the Xeon Phi Coprocessor can be accomplished in a manner of minutes or hours. However, getting production code to run optimally on the MICs is often an effort spanning weeks or months. A few of the key considerations that can speed up your code are:

- Cache alignment

- Vectorization

- Blocking

- Minimal data transfer

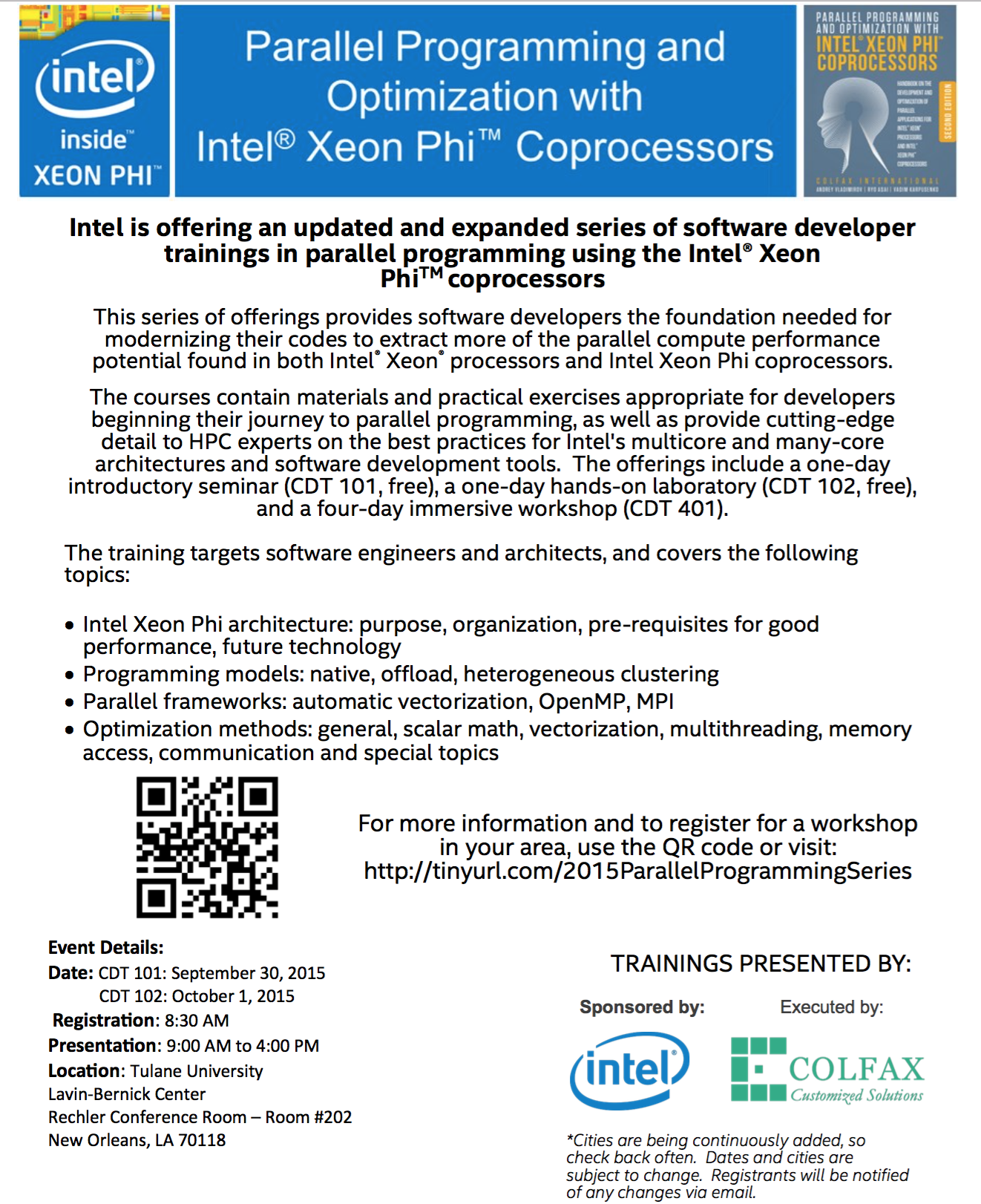

Future Training

We've only scratched the surface on the potential of the Xeon Phi coprocessor. If you are interested in learning more, Colfax International will be giving two days of instruction on coding for the Xeon Phi at Tulane at the end of September. Interested parties can register at

CDT 101: http://events.r20.constantcontact.com/register/event?oeidk=a07eayq4gvha16a1237&llr=kpiwi7pab

CDT 102: http://events.r20.constantcontact.com/register/event?oeidk=a07eayqb5mwf5397895&llr=kpiwi7pab

Attachments (2)

- ColfaxInvite.png (1.2 MB ) - added by 10 years ago.

- xeonPhi.jpg (23.4 KB ) - added by 10 years ago.

Download all attachments as: .zip